Tutorial Details

Interactive information retrieval systems are increasingly central to user experiences. However, rigorously evaluating their performance, particularly as interactions become highly personalized, remains a scientific challenge. User simulation, which employs intelligent agents to mimic human interaction patterns, offers a powerful methodology for tackling this challenge. This half-day tutorial provides a guided tour of simulation techniques for interactive information retrieval, ranging from foundational statistical approaches to advanced LLM-driven frameworks. Through three hands-on segments, participants will implement foundational simulators, work with modern toolkits like SimIIR and IIRSim Studio, and learn to evaluate simulator fidelity against real user behavior.

Objectives

Understand the principles and use cases for user simulation in interactive information retrieval.

Implement foundational simulators using click models and Markov chains.

Apply modern toolkits such as SimIIR and IIRSim Studio for high-fidelity simulation experiments and LLM-based query reformulations.

Evaluate simulator fidelity using metrics for comparing simulated behavior to real user logs.

Target Audience and Prerequisites

Graduate students, academic researchers, and industry practitioners, particularly those with qualitative and user-focused research backgrounds. Basic familiarity with Python programming and Jupyter notebooks is advantageous but not required; all code will be provided, and hands-on assistance will be available.

Scope and Outline

- Part 1: Foundational Simulation [60 min]

Covers the motivation and rationale for user simulation, along with simple interaction models including click models and Markov chains. Participants implement a basic simulator to validate an interactive search feature.
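As a rough illustration of the two model families named above, the following minimal sketch samples a search session from a Markov chain over user actions and generates clicks with a cascade click model. It is not taken from the tutorial materials: the action names, the transition probabilities in `TRANSITIONS`, the attractiveness values, and both function names are assumptions chosen for illustration.

```python
import random

# Hypothetical transition probabilities between user actions (illustrative values only).
TRANSITIONS = {
    "query":   {"snippet": 0.9, "stop": 0.1},
    "snippet": {"click": 0.3, "snippet": 0.4, "query": 0.2, "stop": 0.1},
    "click":   {"snippet": 0.5, "query": 0.3, "stop": 0.2},
}


def simulate_session(transitions, rng, start="query", max_steps=50):
    """Sample one session as a list of actions, ending at 'stop' or after max_steps actions."""
    session = [start]
    state = start
    while state != "stop" and len(session) < max_steps:
        options = transitions[state]
        # Draw the next action according to the current state's transition probabilities.
        state = rng.choices(list(options), weights=list(options.values()), k=1)[0]
        session.append(state)
    return session


def cascade_clicks(attractiveness, rng):
    """Cascade click model: scan results top-down, click the first attractive one, then stop."""
    clicks = [0] * len(attractiveness)
    for rank, p in enumerate(attractiveness):
        if rng.random() < p:
            clicks[rank] = 1
            break  # the cascade assumption allows at most one click per result list
    return clicks


rng = random.Random(42)  # fixed seed so the simulated run is reproducible
session = simulate_session(TRANSITIONS, rng)
clicks = cascade_clicks([0.6, 0.3, 0.1], rng)
print(session)
print(clicks)
```

In practice the transition and attractiveness probabilities would be estimated from interaction logs rather than set by hand.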

- Part 2: Advanced Simulation Frameworks [75 min]

Examines modern toolkit architectures and how to incorporate user data. Participants work with SimIIR and IIRSim Studio for high-fidelity simulation experiments and LLM-based query reformulations.

- Part 3: Evaluating Simulations [45 min]

Addresses the importance of simulator validation, covering metrics for comparing simulated behavior to real user logs and contrasting qualitative and quantitative assessment approaches.
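One simple way to quantify how closely simulated behavior matches real logs is to compare the distribution of actions in each. The sketch below uses the Jensen-Shannon divergence for this; the choice of metric, the toy sessions, and the function names are assumptions for illustration, not the specific metrics covered in the tutorial.

```python
import math
from collections import Counter

ACTIONS = ["query", "snippet", "click", "stop"]  # hypothetical action vocabulary


def action_distribution(sessions, actions):
    """Relative frequency of each action across all sessions (assumes non-empty logs)."""
    counts = Counter(a for s in sessions for a in s)
    total = sum(counts[a] for a in actions)
    return [counts[a] / total for a in actions]


def kl(p, q):
    """Kullback-Leibler divergence in bits; terms with p_i == 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits: 0 for identical distributions, 1 for disjoint ones."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


# Toy logs, invented for this example.
real_sessions = [["query", "snippet", "click", "stop"], ["query", "snippet", "stop"]]
sim_sessions = [["query", "snippet", "snippet", "click", "stop"], ["query", "stop"]]

score = js_divergence(
    action_distribution(real_sessions, ACTIONS),
    action_distribution(sim_sessions, ACTIONS),
)
print(f"JS divergence between real and simulated action distributions: {score:.4f}")
```

A lower score indicates that the simulator reproduces the overall mix of actions more closely. It says nothing about the order of actions, so a fuller evaluation would also compare sequence-level statistics such as session length or transition frequencies.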

Presenters

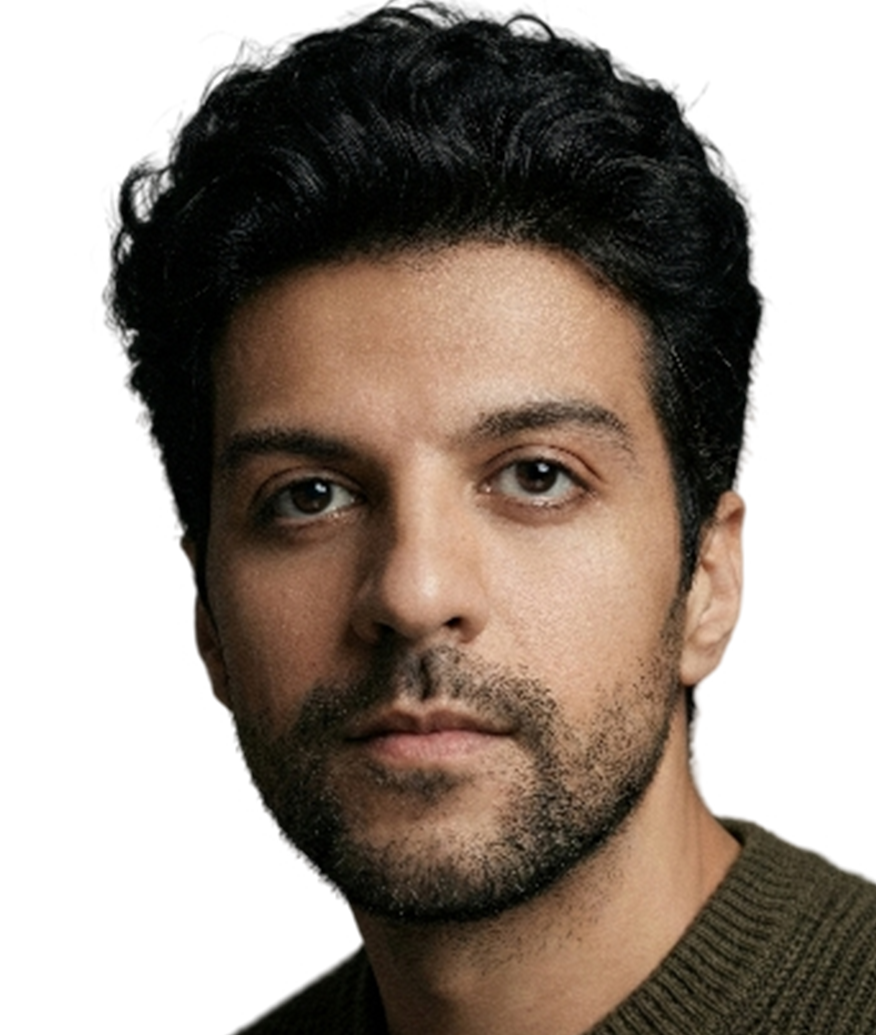

Saber Zerhoudi is a postdoctoral researcher at the University of Passau, Germany. His research focuses on simulating and evaluating user search behavior in interactive information retrieval systems, with applications extending to digital library contexts. He has published his work at conferences including SIGIR, CIKM, ECIR, CHIIR, JCDL, and SIGIR-AP. He is one of the main authors of the SimIIR toolkits (SimIIRv2 and SimIIRv3).

Adam Roegiest is VP of Research and Technology at Zuva. His research interests span information retrieval, natural language processing, and the application of simulation techniques to real-world systems.

Johanne Trippas is a Vice-Chancellor's Senior Research Fellow at RMIT University. Her research focuses on conversational information access, spoken information retrieval, and human-computer interaction.